Phantm.

Route. Optimize. Track.

The only LLM gateway that optimizes AI spend through a proprietary routing engine, saving millions of dollars.

Optimization is a pipeline, not a mystery.

Seeing your LLM bill spike?

Phantm is a drop-in replacement for your LLM API calls that reduces token usage in real time while maintaining response quality. No workflow changes required.

No black-box behavior. Full guardrails. Production-safe optimization for agentic systems.

If you're running agent workflows and watching token spend climb, Phantm keeps costs under control without degrading outputs.

See what Phantm does to your prompt.

Paste any prompt and watch the optimization pipeline activate in real time.

This demo runs on OpenAI. Phantm also supports Anthropic, Gemini, and any OpenAI-compatible provider in production.

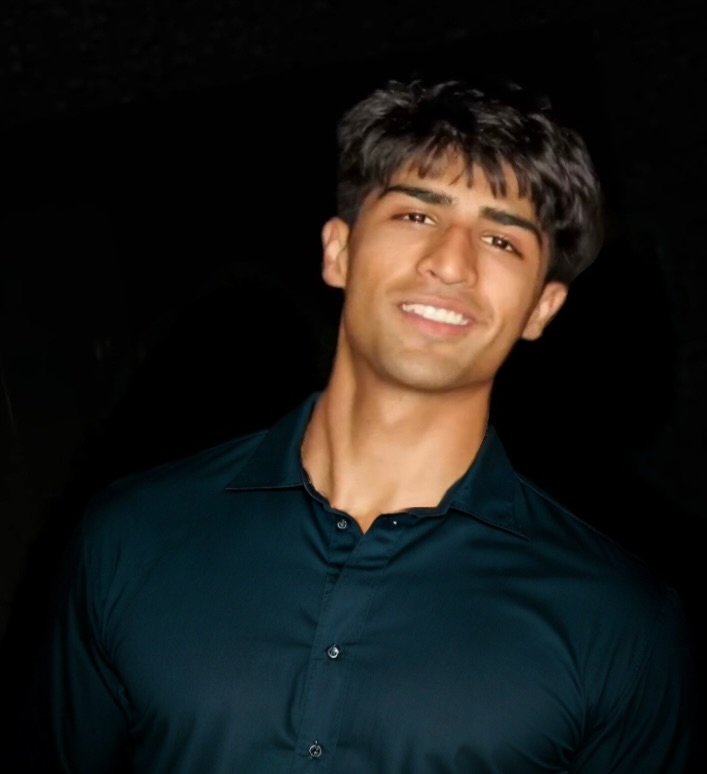

Meet the team.

- Owns pilots: outreach, qualification, closing

- Runs product testing + customer proof artifacts

- Research experience in NN fine-tuning + simulations; helped secure a ~$2M Lily grant

- Architect: leads product and system development

- Experience building predictive systems

- International Math + Physics Olympian
